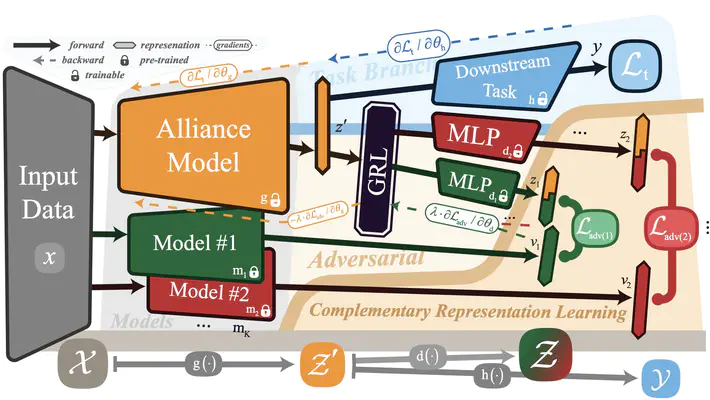

The overview of ACoRL framework

The overview of ACoRL frameworkAbstract

Single-model systems often suffer from deficiencies in tasks such as speaker verification (SV) and image classification, relying heavily on partial prior knowledge during decision-making, resulting in suboptimal performance. Although multi-model fusion (MMF) can mitigate some of these issues, redundancy in learned representations may limits improvements. To this end, we propose an adversarial complementary representation learning (ACoRL) framework that enables newly trained models to avoid previously acquired knowledge, allowing each individual component model to learn maximally distinct, complementary representations. We make three detailed explanations of why this works and experimental results demonstrate that our method more efficiently improves performance compared to traditional MMF. Furthermore, attribution analysis validates the model trained under ACoRL acquires more complementary knowledge, highlighting the efficacy of our approach in enhancing efficiency and robustness across tasks.