LLAM

The Lab of Large Audio Model (LLAM) is committed to creating innovative solutions that enhance privacy, security, and efficiency in decentralized and complex systems.

Recent News

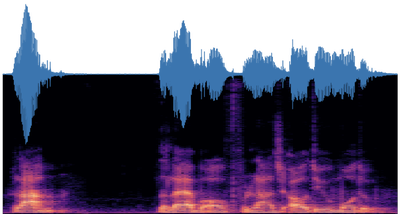

[28/05/2026] $\bullet$ We are pleased to announce that our paper, “Anti-Aliasing-Aware BigVGAN: Spectral Inversion and Frequency Shuffle for Fast High-Fidelity Neural Audio Vocoding,” has been accepted to JCC 2026! This work studies the unique frequency composition patterns of vocoder generator features and improves BigVGAN by removing anti-aliasing filters in lower-rate blocks, adding spectral inversion modules, and introducing frequency shuffle upsampling. With these modifications, the vocoder achieves better reconstruction quality while delivering faster synthesis speed for audio generation.

[01/05/2026] $\bullet$ We are thrilled to announce that our latest work, “DIVA: Decoupling Visual Representations for Unified Multimodal Understanding and Generation,” has been accepted to ICML 2026! In unified multimodal models, “understanding” and “generation” tasks interfere with each other because they require different features. To address this, we propose the DIVA post-training framework. By decoupling visual representations into “shared” and “unique” information, DIVA turns this conflict into synergy and improves performance on both visual understanding and generation.

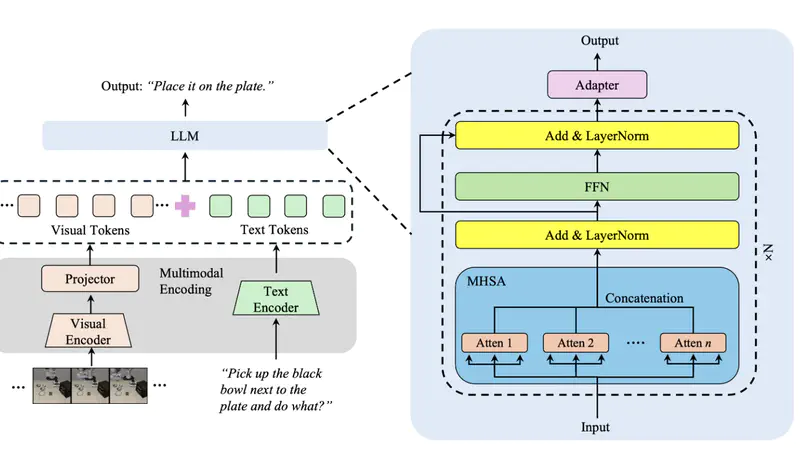

[21/03/2026] $\bullet$ We are proud to announce that our paper, “Evolvable Embodied Agent for Robotic Manipulation via Long Short-Term Reflection and Optimization,” has been accepted to IJCNN 2026! This work proposes an evolvable embodied agent framework that combines large vision-language models for richer environmental interpretation and policy planning with a novel long short-term reflective optimization mechanism.

[17/03/2026] $\bullet$ We are thrilled to announce that our paper, “VLA-InfoEntropy: A Training-Free Vision-Attention Information Entropy Approach for Vision-Language-Action Models Inference Acceleration and Success,” has been accepted to ICME 2026! This work introduces a novel training-free method that leverages image entropy to quantify visual token informativeness and attention entropy to capture semantic relevance, enabling dynamic inference acceleration for Vision-Language-Action models by reducing redundancy while maintaining critical spatial, semantic, and temporal cues. Extensive experiments demonstrate significant improvements in inference efficiency and performance over existing approaches.

[11/03/2026] $\bullet$ We are thrilled to share that our latest research paper, “From Inheritance to Saturation: Disentangling the Evolution of Visual Redundancy for Architecture-Aware MLLM Inference Acceleration,” has been accepted and will be presented at the 64th Annual Meeting of the Association for Computational Linguistics (ACL 2026).

Research Direction

Federated Large Models

Research on Federated Large Models focuses on advancing privacy-preserving distributed learning frameworks that enable collaborative training of large-scale AI models across decentralized data sources. This direction integrates cutting-edge techniques in federated learning, differential privacy, and model compression to address challenges in data silos, communication efficiency, and heterogeneous system environments. Key applications include cross-institutional medical analysis, secure financial risk prediction, and edge-device personalized AI services while ensuring strict compliance with data governance regulations.

Trusted Computing

Research on Trusted Computing aims to build secure and verifiable computing systems through hardware-rooted security mechanisms, enclave-based confidential computing, and decentralized trust verification protocols. We focus on designing architectures that guarantee data integrity, execution traceability, and resistance to adversarial attacks across cloud-edge environments. Our innovations are applied to blockchain consensus optimization, privacy-preserving biometric authentication, and AI model provenance tracking, establishing trust foundations for next-generation mission-critical systems.

Graph Computing

Research on Graph Computing explores efficient algorithms and systems for analyzing complex relational data at web scale. We develop novel graph neural network architectures, dynamic subgraph mining techniques, and heterogeneous graph embedding methods to address challenges in billion-edge network processing, real-time knowledge graph reasoning, and multimodal graph representation learning. Applications span social network fraud detection, drug discovery through molecular interaction networks, and smart city traffic optimization systems.

Large Audio Model

Research on Large Audio Models aims to advance audio processing, generation, understanding, and multimodal processing. This research covers a wide range of applications, including speech recognition, virtual assistants, music composition, and audio synthesis. Within this broad scope, key areas of focus include low-resource TTS, expressive TTS, voice conversion, audio captioning, speech security, and music AI.

Latest Publication

Unified multimodal models (UMMs) built on a single architecture have shown impressive performance in both understanding and generation. We identify a fundamental challenge that lies in the inductive biases induced by distinct supervision signals: the generation branch prefers high-fidelity, fine-grained representations capable of reconstruction, while the understanding branch favours semantically discriminative embeddings that remain invariant to task-irrelevant factors. Consequently, optimizing these complementary but non-equivalent objectives within a monolithic backbone leads to mutual impairment instead of enhancement. In this paper, we first analyze the root cause of this interference in unified backbones and reveal a complementary structure in their internal representations. Motivated by this observation, we propose DIVA, a self-improved post-training framework that transforms the representation divergence into interior synergy. By explicitly factorizing the visual representation into shared and unique components based on two complementary information flows, DIVA enables beneficial transfer between the understanding and generation branches while using mutual information estimation to protect the integrity of unique information from cross-flow interference. Despite its generality, our method consistently achieves improvements across visual understanding (+7.82%) and generation (+8.46%).

Recently, video language models (VLMs) have been applied in various fields. However, the visual token sequence of a VLM is very long, which can cause intolerable inference latency and GPU memory usage. Existing methods apply mixed-precision quantization to the key-value (KV) cache in VLMs at token granularity, which makes the search process time-consuming and the computation hardware-inefficient. This paper introduces a novel approach called WindowQuant, which employs window-adaptive mixed-precision quantization to optimize the KV cache. WindowQuant consists of two modules: window-level quantization search and window-level KV cache computation. Window-level quantization search quickly determines the optimal bit-width configuration of the KV cache windows based on the similarity scores between the corresponding visual token windows and the text prompt, maintaining model accuracy. Furthermore, window-level KV cache computation reorders the KV cache windows before quantization, avoiding the hardware inefficiency caused by mixed-precision quantization in inference computation. Extensive experiments demonstrate that WindowQuant outperforms state-of-the-art VLMs and KV cache quantization methods on various datasets.
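The window-level idea in the abstract above can be illustrated with a minimal Python sketch. This is not the WindowQuant implementation. The function names, the use of mean cosine similarity as the window score, the rule that the most prompt-similar windows receive the higher bit-width, and the 8-bit/2-bit values are all assumptions made for illustration only.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm > 0 else 0.0


def window_scores(tokens: List[Vector], prompt: Vector, window: int) -> List[float]:
    """Score each window of visual tokens by its mean similarity to the prompt.

    Assumption: the prompt is summarized as a single embedding vector.
    """
    scores = []
    for start in range(0, len(tokens), window):
        chunk = tokens[start:start + window]
        scores.append(sum(cosine(t, prompt) for t in chunk) / len(chunk))
    return scores


def assign_bits(scores: List[float], high_fraction: float = 0.25,
                high_bits: int = 8, low_bits: int = 2) -> List[int]:
    """Give the most prompt-similar windows the higher bit-width (hypothetical rule)."""
    n_high = max(1, round(len(scores) * high_fraction))
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    keep_high = set(ranked[:n_high])
    return [high_bits if i in keep_high else low_bits for i in range(len(scores))]


def group_by_bits(bits: List[int]) -> List[int]:
    """Return a window order in which windows sharing a bit-width are contiguous.

    The sort is stable, so windows keep their relative order within each group.
    """
    return sorted(range(len(bits)), key=lambda i: -bits[i])
```

Here `window_scores` stands in for the search step and `group_by_bits` for the reordering step: once windows with the same precision are contiguous, each group could be processed as one uniform-precision block rather than switching precision token by token.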

Recent & Upcoming Events

JCC 2026 is a premier global forum for sharing cutting-edge research and industrial insights into scalable, intelligent JointCloud computing. The conference will be held on 27-30 July, 2026 in a hybrid format, with the physical venue located in Fukuoka, Japan. We invite original contributions in JointCloud computing and at its intersection with advanced AI technologies. Modern enterprises increasingly rely on multi-cloud and hybrid-cloud strategies to orchestrate services across providers, optimize performance, and avoid vendor lock-in. Yet, this distributed paradigm introduces significant challenges in interoperability, security, management, scalability, and intelligent orchestration. JointCloud encompasses hybrid-cloud, multi-cloud, federated cloud, cloud-edge integration, computing network convergence, and blockchain-based systems, serving as the architectural backbone for next-generation cloud ecosystems. JCC 2026 is committed to fostering a leading global platform for exchanging cutting-edge research, sharing practical insights, and identifying future directions that will define the era of scalable, intelligent computing.

ICME 2026 will bring together leading researchers and practitioners to share the latest developments and advances in the discipline. Featuring high-quality oral and poster sessions, world-class keynotes, exhibitions, demonstrations, and tutorials, the conference will attract leading researchers and global industry figures, providing excellent networking opportunities. In addition, exceptional papers and contributors will be selected and recognized with prestigious awards.

The Association for Computational Linguistics (ACL), established in 1962, is one of the most influential and dynamic international academic organizations in natural language processing (NLP) and computational linguistics. Its annual meeting, held every summer, is the premier conference in the field and provides a platform for scholars to present papers and share the latest research findings. The association has members from over 60 countries and regions worldwide and represents the highest level of international research in computational linguistics.

IJCNN is the premier international conference in the area of neural network theory, analysis, and applications. Since its inception, IJCNN has played a leading role in promoting and facilitating interaction among researchers and practitioners and in disseminating knowledge in neural networks and related facets of machine learning. Rome, with its history and geographical position, will further help grow and maintain the role of IJCNN as a prominent platform for the exchange of knowledge in neural networks and artificial intelligence.

Design of complex artifacts and systems requires the cooperation of multidisciplinary design teams. The 2026 29th International Conference on Computer Supported Cooperative Work in Design (CSCWD 2026) provides a forum for researchers and practitioners involved in different but related domains to confront research results and discuss key problems. The scope of CSCWD 2026 includes the research and development of collaboration technologies and their applications to the design of processes, products, systems, and services in industries and societies. Collaboration technologies include theories, methods, mechanisms, protocols, software tools, platforms, and services that support communication, coordination and collaboration among people, software and hardware systems. Related fields of research include human-computer interaction, business process management, collaborative virtual environments, enterprise modeling, security and privacy, as well as social aspects and human factors related to collaboration and design.